
I’ve largely stolen the title of this post from Daniel Mietchen because it helped me to frame the issues. I’m giving an informal talk this afternoon and will, as I frequently do, use this to think through what I want to say. Needless to say this whole post is built to a very large extent on the contributions and ideas of others that are not adequately credited in the text here.

If we imagine what the specification for building a scholarly communications system would look like there are some fairly obvious things we would want it to enable. Registration of ideas, data or other outputs for the purpose of assigning credit and priority to the right people is high on everyone’s list. While researchers tend not to think too much about it, those concerned with the long term availability of research outputs would also place archival and safekeeping high on the list as well. I don’t imagine it will come as any surprise that I would rate the ability to re-use, replicate, and re-purpose outputs very highly as well. And, although I won’t cover it in this post, an effective scholarly communications system for the 21st century would need to enable and support public and stakeholder engagement. Finally this specification document would need to emphasise that the system will support discovery and filtering tools so that users can find the content they are looking for in a huge and diverse volume of available material.

So, filtering, archival, re-usability, and registration. Our current communications system, based almost purely on journals with pre-publication peer review, doesn’t do too badly at archival, although the question of who is actually responsible for doing the archival, and hence paying for it, doesn’t always seem to have a clear answer. Nonetheless the standards and processes for archiving paper copies are better established, and probably better followed in many cases, than those for digital materials in general, and certainly for material on the open web.

The current system also does reasonably well on registration, providing a firm date of submission, author lists, and increasingly descriptions of the contributions of those authors. Indeed the system defines the registration of contributions for the purpose of professional career advancement and funding decisions within the research community. It is a clear and well understood system with a range of community expectations and standards around it. Of course this is circular as the career progression process feeds the system and the system feeds career progression. It is also to some extent breaking down as wider measures of “impact” become important. However for the moment it is an area where the incumbent has clear advantages over any new system, around which we would need to grow new community standards, expectations, and norms.

It is on re-usability and replication where our current system really falls down. Access and rights are big issues here, but ones where we are gradually making headway. The real issues are much more fundamental. It is essentially assumed, in my experience, by most researchers that a paper will not contain sufficient information to replicate an experiment or analysis. Just consider that. Our primary means of communication, in a philosophical system that rests almost entirely on reproducibility, does not enable even simple replication of results. A lot of this is down to the boundaries created by the mindset of a printed multi-page article. Mechanisms to publish methods, detailed laboratory records, or software are limited, often leading to a lack of care in keeping and annotating such records. After all, if it isn’t going in the paper why bother looking after it?

A key advantage of the web here is that we can publish a lot more with limited costs and we can publish a much greater diversity of objects. In principle we can solve the “missing information” problem by simply making more of the record available. However those important pieces of information need to be captured in the first place. Because they aren’t currently valued, because they don’t go in the paper, they often aren’t recorded in a systematic way that makes it easy to ultimately publish them. Open Notebook Science, with its focus on just publishing everything immediately, is one approach to solving this problem but it’s not for everyone, and causes its own overhead. The key problem is that recording more, and communicating it effectively requires work over and above what most of us are doing today. That work is not rewarded in the current system. This may change over time, if as I have argued we move to metrics based on re-use, but in the meantime we also need much better, easier, and ideally near-zero burden tools that make it easier to capture all of this information and publish it when we choose, in a useful form.

Of course, even with the perfect tools, if we start to publish a much greater portion of the research record then we will swamp researchers already struggling to keep up. We will need effective ways to filter this material down to reduce the volume we have to deal with. Arguably the current system is an effective filter. It almost certainly reduces the volume and rate at which material is published. Of all the research that is done, some proportion is deemed “publishable” by those who have done it, a small portion of that research is then incorporated into a draft paper, and some proportion of those papers are ultimately accepted for publication. Up until 20 years ago, when the resource pinch point was the decision of whether or not to publish something, this was exactly what you would want. The question of whether it is a good filter, whether it is actually filtering the right stuff out, is somewhat more controversial. I would say the evidence for that is weak.

When publication and distribution were the expensive part, that was the logical place to make the decision. Now that these steps are cheap, the expensive part of the process is either peer review, the traditional process of making a decision prior to publication, or conversely, the curation and filtering after publication that is seen more widely on the open web. As I have argued, I believe that using the patterns of the web will ultimately be a more effective means of enabling users to discover the right information for their needs. We should publish more, much more and much more diversely, but we also need to build effective tools for filtering and discovering the right pieces of information. Clearly this also requires work, perhaps more than we are used to doing.

An imaginary solution

So what might this imaginary system that we would design look like? I’ve written before about both key aspects of this. Firstly I believe we need recording systems that, as far as possible, record and publish the creation of objects, be they samples, data, or tools. These should make a reliable, time stamped, attributable record of the creation of these objects as a byproduct of what the researcher needs to do anyway. A simple concept, for instance, is a label printer that, as a byproduct of printing off a label, makes a record of who, what, and when, publishing this simultaneously to a public or private feed.
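The label printer idea can be sketched in a few lines of Python. This is only an illustration under loud assumptions: `LabelRecord`, `print_label`, and the in-memory `feed` list are hypothetical names invented here, the "printer" is just a `print` call, and a real system would push the record to a file or web feed rather than a list.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional
import json


@dataclass
class LabelRecord:
    who: str   # the researcher who printed the label
    what: str  # the text on the label, e.g. a sample name
    when: str  # ISO 8601 timestamp in UTC


def print_label(who: str, what: str, feed: list,
                now: Optional[datetime] = None) -> LabelRecord:
    """Print a label and, as a byproduct, append a record to the feed."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = LabelRecord(who=who, what=what, when=timestamp)
    # Stand-in for sending the text to a real label printer.
    print(f"[LABEL] {what}")
    # Hypothetical feed: an in-memory list standing in for a public or private feed.
    feed.append(asdict(record))
    return record


feed = []
print_label("alice", "Sample 42: lysozyme, 10 mg/mL", feed)
print(json.dumps(feed, indent=2))
```

The point of the design is that the researcher only asks for a label; the who, what, and when are captured without any extra step.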

Publishing rapidly is a good approach, not just for ideological reasons of openness but also for some very pragmatic ones. Firstly, it is easier to publish at the source than to remember to go back and do it later. Things that aren’t done immediately are almost invariably forgotten or lost. Secondly, rapid publication has the potential both to enable efficient re-use and to reduce the risk of scooping by providing a time stamped citable record. This of course would require people to cite these records and for those citations to be valued as a contribution, which in turn requires a move away from considering the paper as the only form of valid research output (see also Michael Nielsen’s interview with me).

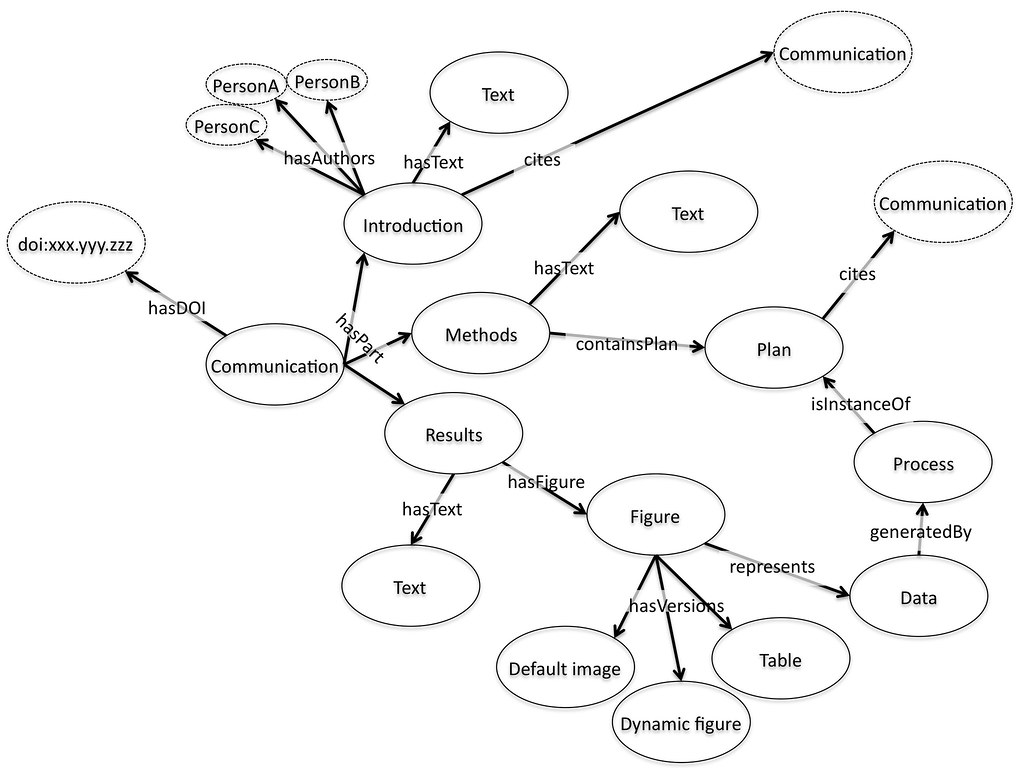

It isn’t enough, though, just to publish the objects themselves. We also need to be able to understand the relationships between them. In a semantic web sense this means creating the links between objects, recording the context in which they were created and what their inputs and outputs were. I have alluded a couple of times in the past to the OREChem Experimental Ontology and I think this is potentially a very powerful way of handling these kinds of connections in a general way. In many cases, particularly in computational research, recording workflows or generating batch and log files could serve the same purpose, as long as a general vocabulary could be agreed to make this exchangeable.

As these objects get linked together they will form a network, both within and across projects and research groups, providing the kind of information that makes Google work, a network of citations and links that make it possible to directly measure the impact of a single dataset, idea, piece of software, or experimental method through its influence over other work. This has real potential to help solve both the discovery problem and the filtering problem. Bottom line, Google is pretty good at finding relevant text and they’re working hard on other forms of media. Research will have some special edges but can be expected in many ways to fit patterns that mean tools for the consumer web will work, particularly as more social components get pulled into the mix.
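As a rough illustration of measuring influence through links, the sketch below counts everything that depends on an object, directly or indirectly. The object names and the `(source, derived)` link format are made up for the example, and counting downstream objects is only one crude stand-in for “impact”, not a proposal for the right metric.

```python
from collections import defaultdict, deque


def build_used_by(links):
    """Map each object to the set of objects that directly used it."""
    used_by = defaultdict(set)
    for source, derived in links:
        used_by[source].add(derived)
    return used_by


def downstream(obj, used_by):
    """Return every object that depends on obj, directly or indirectly."""
    seen = set()
    queue = deque([obj])
    while queue:
        current = queue.popleft()
        for nxt in used_by.get(current, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


# Hypothetical links: (object that was used, object that was created from it).
links = [
    ("method-1", "dataset-1"),
    ("dataset-1", "analysis-1"),
    ("dataset-1", "analysis-2"),
    ("analysis-1", "paper-1"),
]
used_by = build_used_by(links)
print(sorted(downstream("dataset-1", used_by)))
# prints ['analysis-1', 'analysis-2', 'paper-1']
```

Here the dataset gets credit for the paper even though the paper only links to the analysis, which is the kind of indirect influence a paper-only citation count misses.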

On the rare occasions when it is worth pulling together a whole story, for a thesis, or a paper, authors would then aggregate objects together, along with text and good visuals to present the data. The telling of a story then becomes a special event, perhaps one worthy of peer review in its traditional form. The forming of a “paper” is actually no more than providing new links, adding grist to the search and discovery mill, but it can retain its place as a high status object, merely losing its role as the only object worth considering.

So in short, publish fragments, comprehensively and rapidly. Weave those into a wider web of research communication, and from time to time put in the larger effort required to tell a more comprehensive story. This requires tools that are hard to build, standards that are hard to agree, and cultural change that at times seems like spitting into a hurricane. Progress is being made, in many places and in many ways, but how can we take this forward today?

Practical steps for today

I want to write more about these ideas in the future but here I’ll just sketch out a simple scenario that I hope can be usefully implemented locally but provide a generic framework to build out without necessarily requiring a massive agreement on standards.

The first step is simple: make a record, ideally an address on the web, for everything we create in the research process. For data and software, just the files themselves on a hard disk are a good start. Pushing them to some sort of web storage, be it a blog, GitHub, an institutional repository, or some dedicated data storage service, is even better because it makes step two easy.

Step two is to create feeds that list all of these objects, their addresses, and as much standard metadata as possible; who and when would be a good start. I would make these open by choice, mainly because dealing with feed security is a pain, but this would still work behind a firewall.
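One way step two might look is a small Atom feed built with Python’s standard library. This is a minimal sketch, not a recommendation of Atom over any other format: `build_feed`, the dictionary keys (`title`, `url`, `who`, `when`), and the `example.org` addresses are all assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

ATOM_NS = "http://www.w3.org/2005/Atom"


def tag(name):
    """Qualify an element name with the Atom namespace."""
    return f"{{{ATOM_NS}}}{name}"


def build_feed(title, feed_url, objects):
    """Build an Atom feed listing each object's address, author, and timestamp."""
    ET.register_namespace("", ATOM_NS)
    feed = ET.Element(tag("feed"))
    ET.SubElement(feed, tag("title")).text = title
    ET.SubElement(feed, tag("id")).text = feed_url
    # Feed-level timestamp: the most recent entry (ISO 8601 strings in UTC sort correctly).
    latest = max((o["when"] for o in objects), default="1970-01-01T00:00:00Z")
    ET.SubElement(feed, tag("updated")).text = latest
    for obj in objects:
        entry = ET.SubElement(feed, tag("entry"))
        ET.SubElement(entry, tag("title")).text = obj["title"]
        ET.SubElement(entry, tag("id")).text = obj["url"]
        ET.SubElement(entry, tag("link"), href=obj["url"])
        ET.SubElement(entry, tag("updated")).text = obj["when"]
        author = ET.SubElement(entry, tag("author"))
        ET.SubElement(author, tag("name")).text = obj["who"]
    return ET.tostring(feed, encoding="unicode")


# Hypothetical objects; the addresses are placeholders.
objects = [
    {"title": "Sample 42", "url": "https://example.org/samples/42",
     "who": "alice", "when": "2010-01-01T09:00:00Z"},
    {"title": "Raw spectra for sample 42", "url": "https://example.org/data/17",
     "who": "alice", "when": "2010-01-02T14:30:00Z"},
]
print(build_feed("Lab objects", "https://example.org/feed", objects))
```

A standard feed format means existing feed readers and aggregators can consume the list without any custom code.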

Step three gets slightly harder. Where possible configure your systems so that inputs can always be selected from a user-configurable feed. Where possible automate the pushing of outputs to your chosen storage systems so that new objects are automatically registered and new feeds created.

This is extraordinarily simple conceptually. Create feeds, use them as inputs for processes. It’s not so straightforward to build such a thing into an existing tool or framework, but it doesn’t need to be too terribly difficult either. And it doesn’t need to bother the user either. Feeds should be automatically created, and presented to the user as drop down menus.
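To make “create feeds, use them as inputs” concrete, here is a rough sketch in which feeds are plain lists of dictionaries. `menu_options` and `register_output` are hypothetical helpers, the entry fields are assumed, and a real tool would read and write actual feeds rather than in-memory lists.

```python
from datetime import datetime, timezone


def menu_options(feed):
    """Turn feed entries into (label, address) pairs, newest first, for a drop-down menu."""
    ordered = sorted(feed, key=lambda e: e["when"], reverse=True)
    return [(f'{e["title"]} ({e["who"]})', e["url"]) for e in ordered]


def register_output(feed, title, url, who, inputs):
    """Append a new object to a feed, recording which inputs it was made from."""
    entry = {
        "title": title,
        "url": url,
        "who": who,
        "when": datetime.now(timezone.utc).isoformat(),
        "inputs": list(inputs),
    }
    feed.append(entry)
    return entry


# Hypothetical feed of samples that an instrument could offer as inputs.
samples_feed = [
    {"title": "Sample 41", "url": "https://example.org/samples/41",
     "who": "alice", "when": "2010-01-01T09:00:00+00:00"},
    {"title": "Sample 42", "url": "https://example.org/samples/42",
     "who": "bob", "when": "2010-01-02T09:00:00+00:00"},
]
data_feed = []

options = menu_options(samples_feed)   # what the user would see in the menu
chosen_label, chosen_url = options[0]  # the user picks the newest sample
register_output(data_feed, "Spectrum of " + chosen_label,
                "https://example.org/data/1", "bob", [chosen_url])
print(data_feed[0]["inputs"])
```

Because the output entry stores the address of the input it came from, the link between the two objects is recorded without the user typing anything.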

The step beyond this, creating a standard framework for describing the relationships between all of these objects, is much harder. Not because it’s technically difficult, but because it requires an agreement on standards for how to describe those relationships. This is do-able, and I’m very excited by the work at Southampton on the OREChem Experimental Ontology, but the social problems are harder. Others prefer the Open Provenance Model or argue that workflows are the way to manage this information. Getting agreement on standards is hard, particularly if we’re trying to maximise their effective coverage, but if we’re going to build a computable record of science we’re going to have to tackle that problem. If we can crack it and get coverage of the records via a compatible set of models that tell us how things are related, then I think we will be well placed to solve the cultural problem of actually getting people to use them.
