- Image by cameronneylon via Flickr

If you’ve been around either me or Deepak Singh you will almost certainly have heard the Jeff Jonas/Jon Udell soundbite: ‘Data finds data. Then people find people’. Jonas is referring to data management frameworks and knowledge discovery, and Udell to the power of integrated data to bring people together.

At some level Jonas’ vision (see his chapter[pdf] in Beautiful Data) is what the semantic web ought to enable, the automated discovery of data or objects based on common patterns or characteristics. Thus far in practical terms we have signally failed to make this a reality, particularly for research data and objects.

Udell’s angle (or rather, my interpretation of his overall stance) is more linked to the social web – the discovery of common contexts through shared data frameworks. These contexts might be social groups, as in conventional social networks, a particular interest or passion, or – in the case of Jon’s championing of the iCalendar standard – a date and place, as demonstrated by the elmcity project supporting calendar curation and aggregation. Shared context enables the making of new connections, the creation of new links. But still mainly links between people.

It’s not the scientists who are social; it’s the data – Neil Saunders

The naïve analysis of the success of consumer social networks and the weaknesses of science communication has led to efforts that almost precisely invert the Jonas/Udell concept. In the case of most of these “Facebooks for Scientists” the idea is that people find people, and then they connect with data through those people.

My belief is that it is this approach that has led to the almost complete failure of these networks to gain traction. Services that place the research object at the centre (the reference management and bookmarking services, and to some extent Twitter and Friendfeed) appear to gain much more real scientific use because they mediate the interactions that researchers are interested in: those between themselves and research objects. Friendfeed in particular seems to support this discovery pattern. Objects of interest are brought into your stream, which then leads to discovery of the person behind them. I often use Citeulike in this mode. I find a paper of interest, identify the tags other people have used for it and the papers that share those tags. If these seem promising, I then might look at the library of the person, but I get to that person through the shared context of the research object, the paper, and the tags around that object.
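The Citeulike pattern described above (paper, then tags, then papers sharing those tags, then the people behind them) can be sketched in a few lines of Python. This is only an illustration: the bookmark records, paper names, and function names are all invented, and it does not use or reflect any real Citeulike API.

```python
from collections import Counter

# Hypothetical bookmarks as (person, paper, tags). All values are invented.
BOOKMARKS = [
    ("alice", "paper-A", {"open-data", "crystallography"}),
    ("bob", "paper-A", {"linked-data"}),
    ("bob", "paper-B", {"linked-data", "rdf"}),
    ("carol", "paper-C", {"open-data", "linked-data"}),
    ("dave", "paper-D", {"proteomics"}),
]


def tags_for(paper):
    """Collect every tag that anyone has attached to this paper."""
    tags = set()
    for _, bookmarked, paper_tags in BOOKMARKS:
        if bookmarked == paper:
            tags |= paper_tags
    return tags


def related_papers(paper):
    """Rank other papers by how many tags they share with `paper`."""
    seed_tags = tags_for(paper)
    others = {p for _, p, _ in BOOKMARKS if p != paper}
    scores = Counter()
    for other in others:
        shared = len(seed_tags & tags_for(other))
        if shared:
            scores[other] = shared
    return scores.most_common()


def people_behind(paper):
    """Only now do we reach people: whoever bookmarked the paper."""
    return sorted({person for person, p, _ in BOOKMARKS if p == paper})


print(related_papers("paper-A"))
print(people_behind("paper-C"))
```

The point of the ordering is that people appear only in the last step. The object and its tags provide the shared context, and the person is discovered through them.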

Data, data everywhere, but not a lot of links – Simon Coles

A common complaint made of research data is that people don’t make it available. This is part of the problem but increasingly it is a smaller part. It is easy enough to put data up that many researchers are doing so, in supplementary data of journal articles, on personal websites, or on community or consumer sites. From a linked data perspective we ought to be having a field day with this, even if it represents only a small proportion of the total. However, little of this data is easily discoverable and most of it is certainly not linked in any meaningful way.

A fundamental problem that I feel like I’ve been banging on about for years now is the dearth of well-built tools for creating these links. Finally these tools are starting to appear, with Freebase Gridworks being an early example. There is a good chance that it will become easier over time for people to create links as part of the process of making their own record. But the fundamental problems we always face, that this is hard work, and often unrewarded work, are limiting progress.

Data friends data…then knowledge becomes discoverable

Human interaction is unlikely to work at scale. We are going to need automated systems to wire the web of data together. The human process simply cannot keep up with the ongoing annotation and connection of data at the volumes that are being generated today. And we can’t afford not to build those systems if we want to optimize the opportunities of research to deliver useful outcomes.

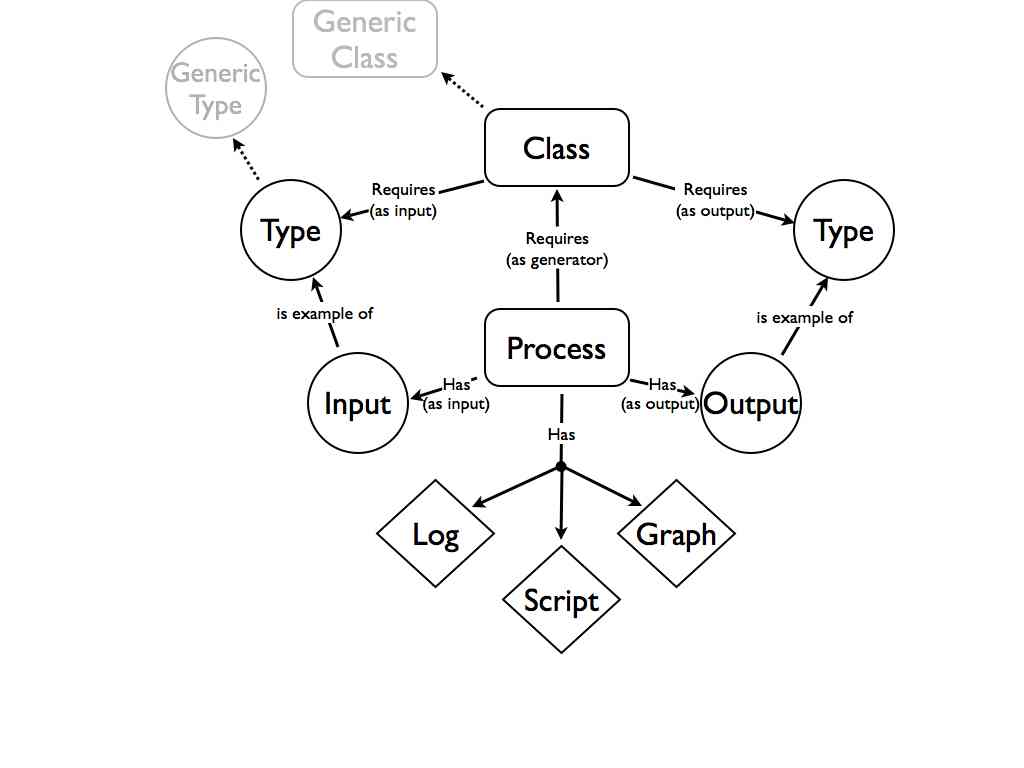

When we think about social networks we always place people at their centre. But there is nothing to stop us replacing people with data or other research objects. Software that wants to find data, data that wants to find complementary or supportive data, or wants to find the right software to convert or analyze it. Instead of Farmville or Mafia Wars imagine useful tools that make these connections, negotiate content, and identify common context. As pointed out to me by Paul Walk this is very similar to what was envisioned in the 90s as the role of software agents. In this view the human research users are the poorly connected users on the outskirts of the web.

The point is that the hard part of creating linked data is making the links, not publishing the data. The semantic web has always suffered from the chicken and egg problem of a lack of user-friendly ways to generate RDF and few tools that could really use that RDF in exciting ways even if it did exist. I still can’t do a useful search on which restaurants in Bath will be open next Sunday. The reality is that the innards of this should be hidden from the user, the making of connections needs to be automated as far as possible, and as natural as possible when the user has to be involved. As easy as hitting that “like” button, or right clicking and adding a citation.
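To make the idea of automated link-making a little more concrete, here is a minimal sketch, assuming that datasets and papers each carry a DOI-like identifier in their metadata. Every record, URI, field name, and the `supports` predicate are hypothetical and chosen purely for illustration; a real system would need far more robust matching than this.

```python
def normalize_doi(raw):
    """Reduce common DOI spellings to a bare, lower-case identifier.

    DOIs are case-insensitive, so lower-casing is safe for matching.
    """
    doi = raw.strip().lower()
    for prefix in ("https://doi.org/", "http://dx.doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):]
            break
    return doi


def propose_links(datasets, papers):
    """Return (subject, predicate, object) triples for matching DOIs."""
    paper_by_doi = {normalize_doi(p["doi"]): p["uri"] for p in papers}
    links = []
    for dataset in datasets:
        target = paper_by_doi.get(normalize_doi(dataset["mentions_doi"]))
        if target is not None:
            # "supports" is an invented predicate, not a real vocabulary term.
            links.append((dataset["uri"], "supports", target))
    return links


# Invented example records; none of these identifiers are real.
PAPERS = [
    {"uri": "http://example.org/paper/1", "doi": "10.1234/ABC.1"},
    {"uri": "http://example.org/paper/2", "doi": "doi:10.1234/xyz.9"},
]
DATASETS = [
    {"uri": "http://example.org/data/7",
     "mentions_doi": "https://doi.org/10.1234/abc.1"},
    {"uri": "http://example.org/data/8", "mentions_doi": "10.9999/unknown"},
]

for triple in propose_links(DATASETS, PAPERS):
    print(triple)
```

Nothing here asks the researcher to write RDF by hand. The link is proposed from metadata that already exists, and the human role shrinks to confirming or rejecting it, which is about as much effort as hitting a “like” button.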

We have learnt a lot about the principles of when and how social networks work. If we can apply those lessons to the construction of open data management and discovery frameworks then we may stand some chance of actually making some of the original vision of the web work.
